High-dimensional data often has far less intrinsic variation than the dimension would suggest. A class of students’ grade transcripts, or a collection of images, may vary along just a few underlying “axes.” Latent variable models make this intuition precise by positing that the high-dimensional observations are generated from a low-dimensional latent variable with .

In this chapter we study the simplest such models, where the relationship between and is linear and all distributions are Gaussian:

Principal Components Analysis (PCA): two classical optimization formulations and their connection to the eigenvectors of the sample covariance matrix

Probabilistic PCA (PPCA): PCA as the maximum likelihood solution to a Gaussian latent variable model, enabling Bayesian inference

Factor Analysis (FA): a generalization of PPCA with per-dimension noise variances

Other linear LVMs: Independent Components Analysis (ICA) and Probabilistic Canonical Correlation Analysis (PCCA)

Source

import torch

import matplotlib.pyplot as pltPrincipal Components Analysis¶

Motivation¶

Suppose we observe grade transcripts where is a vector of grades for student . We might want to:

Reduce dimensionality: are there a few axes of variation?

Visualize: embed the points in 2–3 dimensions for plotting.

Compress: summarize each student’s record compactly.

PCA addresses all three. There are two classical equivalent formulations.

Maximum Variance Formulation¶

Goal: project the data onto an -dimensional subspace while maximizing the variance of the projected data.

For , let be a unit vector defining the subspace. The projection of is the scalar (the score or embedding). Its variance over the data points is:

where is the sample mean and is the sample covariance matrix.

Maximizing subject to via a Lagrange multiplier gives , so must be an eigenvector of . Left-multiplying by shows the projected variance equals , so we should choose the eigenvector with the largest eigenvalue.

More generally, the -dimensional principal subspace is spanned by the eigenvectors with the largest eigenvalues .

Linear Autoencoder Formulation¶

Goal: find an orthogonal matrix (with ) that minimizes mean squared reconstruction error.

We encode as and decode as . The loss is:

Minimizing is equivalent to maximizing , which is again solved by , the leading eigenvectors.

PCA and the Singular Value Decomposition¶

Let where has rows . Then .

The SVD of reveals:

Right singular vectors of (columns of ) = eigenvectors of = principal components.

Squared singular values = eigenvalues of .

In practice, PCA is computed via SVD of rather than by eigendecomposing , since SVD is numerically more stable.

Explained Variance¶

The fraction of total variance captured by principal components is:

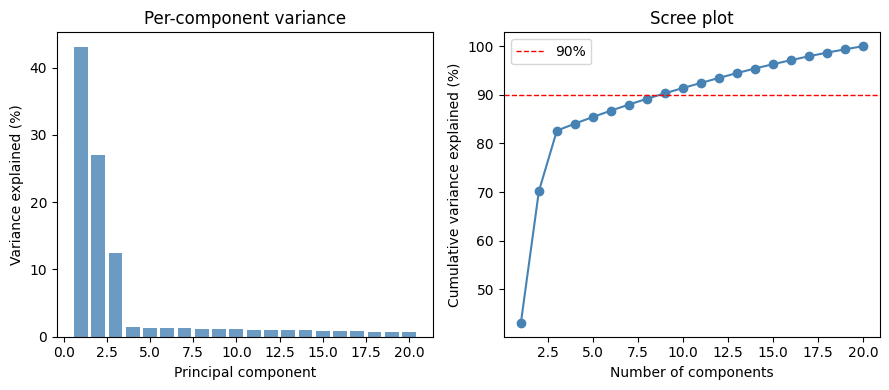

A scree plot shows this quantity (per-component and cumulative) as a function of . Below we generate synthetic data from a rank-3 linear Gaussian model and visualize the scree plot.

# Generate synthetic data from a rank-3 linear Gaussian model

torch.manual_seed(305)

N, D, M_true = 300, 20, 3 # datapoints, features, true latent dims

W_true = torch.randn(D, M_true) # D x M weight matrix

Z_true = torch.randn(N, M_true) # N x M latent variables

σ2 = torch.tensor(0.5) # noise variance

X = Z_true @ W_true.T + torch.sqrt(σ2) * torch.randn(N, D)

# Centre the data and compute PCA via SVD

μ = X.mean(0) # sample mean, shape (D,)

Xc = X - μ # centred data, shape (N, D)

Y = Xc / N**0.5 # Y.T @ Y = sample covariance S

_, s, Vt = torch.linalg.svd(Y, full_matrices=False)

UM = Vt.T # principal components, columns of UM

λ = s**2 # eigenvalues of S (variances)Source

fig, axs = plt.subplots(1, 2, figsize=(9, 4))

pct = 100 * λ / λ.sum()

axs[0].bar(range(1, D+1), pct.numpy(), color='steelblue', alpha=0.8)

axs[0].set_xlabel('Principal component')

axs[0].set_ylabel('Variance explained (%)')

axs[0].set_title('Per-component variance')

axs[1].plot(range(1, D+1), torch.cumsum(pct, 0).numpy(), 'o-', color='steelblue')

axs[1].axhline(90, color='r', ls='--', lw=1, label='90%')

axs[1].set_xlabel('Number of components')

axs[1].set_ylabel('Cumulative variance explained (%)')

axs[1].set_title('Scree plot')

axs[1].legend()

plt.tight_layout()

Probabilistic PCA¶

Model¶

The two classical formulations above cast PCA as an optimization problem. Probabilistic PCA (PPCA) instead defines a joint distribution over and a latent variable :

where are the loadings (analogous to the principal components), is the mean, and is an isotropic noise variance.

Equivalently, with .

The marginal distribution of is:

a Gaussian with low-rank plus diagonal covariance — only parameters instead of .

Maximum Likelihood Estimation¶

The marginal log-likelihood is:

Tipping and Bishop (1999) showed the MLE has a closed form:

where contains the leading eigenvectors of the sample covariance , , and is an arbitrary orthogonal matrix.

The weights are identifiable only up to orthogonal rotation — only the subspace spanned by is uniquely determined. The MLE noise variance is the average variance discarded in the trailing dimensions.

Posterior Distribution on Latent Variables¶

Given parameters , , , the posterior on combines the Gaussian prior with the Gaussian likelihood:

where

Note that the posterior precision matrix is the same for all ; only the information vector varies. The system is much cheaper to invert than the marginal covariance.

Zero-noise limit: as , the posterior mean converges to , the ordinary least-squares projection — identical to classical PCA (up to scaling).

# MLE for PPCA with M components

M_fit = 3

UM_fit = Vt[:M_fit].T # D x M leading principal components

ΛM = torch.diag(λ[:M_fit]) # M x M eigenvalue matrix

σ2_ML = λ[M_fit:].mean() # MLE noise variance

# MLE weights (orthogonal rotation R = I)

W_ML = UM_fit @ (ΛM - σ2_ML * torch.eye(M_fit)).sqrt()

# Posterior on latent variables

J = torch.eye(M_fit) + (1/σ2_ML) * W_ML.T @ W_ML # M x M precision

Σz = torch.linalg.inv(J) # M x M posterior cov

H = Xc @ W_ML / σ2_ML # N x M info vectors

Z_hat = H @ Σz.T # N x M posterior means

print(f'MLE noise variance σ² = {σ2_ML:.3f} (true = {σ2.item():.3f})')

print(f'Posterior covariance Σz:\n{Σz.numpy().round(3)}')MLE noise variance σ² = 0.483 (true = 0.500)

Posterior covariance Σz:

[[ 0.024 0. -0. ]

[ 0. 0.038 0. ]

[-0. 0. 0.082]]

Source

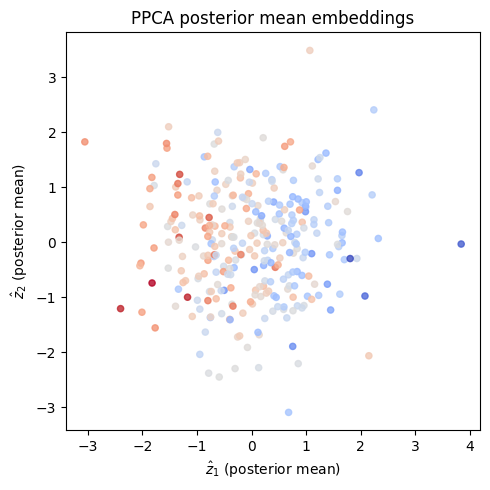

# Visualize the 2D posterior mean embedding

fig, ax = plt.subplots(figsize=(5, 5))

ax.scatter(Z_hat[:, 0].numpy(), Z_hat[:, 1].numpy(),

c=Z_true[:, 0].numpy(), cmap='coolwarm', s=20, alpha=0.8)

ax.set_xlabel(r'$\hat{z}_1$ (posterior mean)')

ax.set_ylabel(r'$\hat{z}_2$ (posterior mean)')

ax.set_title('PPCA posterior mean embeddings')

plt.tight_layout()

Gibbs Sampling for Probabilistic PCA¶

If we want a fully Bayesian treatment, we place a prior on the parameters. For simplicity assume (center the data first) and put:

where is the -th row of .

Complete conditional for each row : combining the row prior with the likelihoods for the -th output gives a Gaussian:

where

Complete conditional for : conjugate with the scaled inverse chi-squared family:

Complete conditional for : same as the PPCA posterior derived above.

Factor Analysis¶

Factor analysis (FA) relaxes the isotropic noise assumption of PPCA by allowing each output dimension to have its own noise variance:

where . The marginal covariance is — still low-rank plus diagonal, but with independent noise scales instead of one.

With the per-dimension prior:

the Gibbs updates for each pair are identical to those for PPCA but with in place of the shared . All rows are independent given , so they can be updated in parallel.

Other Linear Latent Variable Models¶

Independent Components Analysis¶

ICA replaces the Gaussian latent prior with a product of non-Gaussian marginals:

The non-Gaussian prior is essential: if were Gaussian, any correlation in could be absorbed into , reducing the model to factor analysis. A common choice is a Laplace (heavy-tailed) prior, , which encourages the latent factors to be sparse.

ICA is widely used in signal processing (e.g., separating audio sources) and neuroscience.

Probabilistic Canonical Correlation Analysis¶

Suppose we observe two data modalities, and . Probabilistic CCA posits:

The shared latent variables capture common structure across modalities; private variables and capture modality-specific variation.

Bach & Jordan, 2005 showed that the MLE recovers the classical CCA solution: the shared weights and correspond to the left and right singular vectors of the sample cross-correlation matrix .

Conclusion¶

This chapter developed a family of linear Gaussian latent variable models with progressively richer structure:

| Model | Noise covariance | Latent prior |

|---|---|---|

| PPCA | (isotropic) | |

| Factor analysis | (diagonal) | |

| ICA | (non-Gaussian) | |

| PCCA | (per modality) | shared + private Gaussians |

Key takeaways:

PCA is the MLE for PPCA: the principal components are the leading eigenvectors of the sample covariance, computed efficiently via SVD.

The posterior on latent variables requires only inverting an system (not ), making inference tractable.

Gibbs sampling for PPCA / FA reduces to iterated Bayesian linear regressions — one per output dimension.

The non-Gaussian prior in ICA breaks the rotational indeterminacy of FA and enables recovery of independent source signals.

- Bach, F. R., & Jordan, M. I. (2005). A probabilistic interpretation of canonical correlation analysis.

- Bishop, C. M. (2006). Pattern recognition and machine learning. Springer.