Source

import torch

from torch.distributions import Gamma, TransformedDistribution, Normal, StudentT

from torch.distributions.transforms import PowerTransform

import matplotlib.pyplot as plt

from matplotlib.cm import BluesNormal Model with Unknown Mean¶

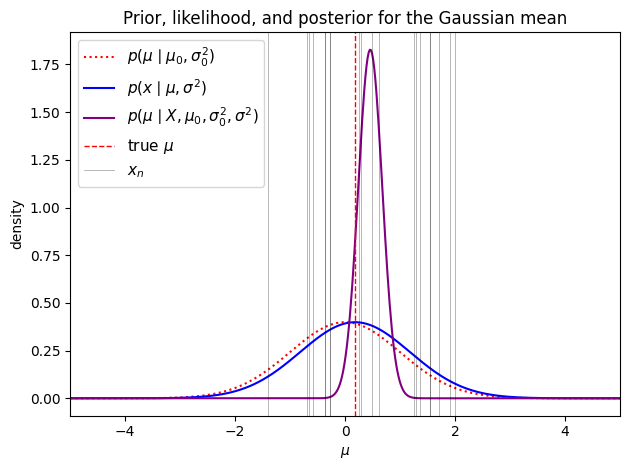

Let’s start with the simplest nontrivial Bayesian model to get a feel for how the machinery works.

We place a Gaussian prior on and model each score as an i.i.d. draw from a Gaussian with that mean:

The hyperparameters are : the prior mean , prior variance , and (known) data variance . The prior variance encodes how uncertain we are about the mean before seeing any data — a large corresponds to a vague, spread-out prior.

Computing the Posterior¶

By Bayes’ rule, the posterior is proportional to the prior times the likelihood. Expanding the Gaussian densities,

Since all factors are exponentials of quadratics in , the product is also an exponential of a quadratic in — which means the posterior is Gaussian. This is what it means for the Gaussian prior to be conjugate to the Gaussian likelihood.

To identify the posterior, we collect the terms quadratic and linear in in the exponent:

where we define the posterior precision and posterior information,

The precision is simply the sum of the prior precision and the total data precision — information is additive. The information vector similarly sums contributions from the prior and each data point.

Completing the Square¶

The exponent is a quadratic in . We can complete the square to convert it to the form , where does not depend on . Specifically:

Dropping the constant and recognizing and , we find

where

with being the sample mean (and maximum likelihood estimate).

Interpreting the Posterior¶

The posterior mean is a weighted average of the prior mean and the MLE . The weights are determined by the relative precision of the prior versus the data. When is large, almost all weight goes to the data and . When the prior is diffuse (, i.e., an uninformative prior), the prior precision vanishes and again .

The posterior variance follows a simpler pattern via the precision:

Each new observation contributes of additional precision. As , the posterior variance shrinks to zero — we become increasingly certain about . Under an uninformative prior (), the posterior variance becomes , the familiar sampling variance of the mean.

Source

torch.manual_seed(305)

# Model hyperparameters and true parameter

μ0 = torch.tensor(0.0)

σ2_0 = torch.tensor(1.0)

σ2 = torch.tensor(1.0)

# Simulate data

N = 20

μ = Normal(μ0, torch.sqrt(σ2_0)).sample()

X = Normal(μ, torch.sqrt(σ2)).expand([N]).sample()

# Compute posterior parameters

J_N = 1 / σ2_0 + N / σ2

h_N = μ0 / σ2_0 + X.sum() / σ2

μ_N = h_N / J_N

σ2_N = 1 / J_N

# Plot prior, likelihood, and posterior

prior = Normal(μ0, torch.sqrt(σ2_0))

lkhd = Normal(μ, torch.sqrt(σ2))

post = Normal(μ_N, torch.sqrt(σ2_N))

grid = torch.linspace(-5, 5, 500)

fig, ax = plt.subplots()

ax.plot(grid, torch.exp(prior.log_prob(grid)),

':r', label=r"$p(\mu \mid \mu_0, \sigma_0^2)$")

ax.plot(grid, torch.exp(lkhd.log_prob(grid)),

'-b', label=r"$p(x \mid \mu, \sigma^2)$")

ax.plot(grid, torch.exp(post.log_prob(grid)),

'-', color='purple', label=r"$p(\mu \mid X, \mu_0, \sigma_0^2, \sigma^2)$")

ax.axvline(μ.item(), color='r', linestyle='--', lw=1, label=r"true $\mu$")

for n, x in enumerate(X):

ax.axvline(x.item(), color='k', lw=0.5, alpha=0.4,

label=r"$x_n$" if n == 0 else None)

ax.set_xlabel(r"$\mu$")

ax.set_ylabel("density")

ax.set_xlim(-5, 5)

ax.legend(fontsize=11)

ax.set_title("Prior, likelihood, and posterior for the Gaussian mean")

plt.tight_layout()

Normal Model with Unknown Precision¶

Now suppose the mean is known but the variance is not. Our calculations are slightly cleaner if we work with the precision rather than the variance directly. In terms of the precision, the Gaussian density is,

What prior should we place on ? We need a distribution on (since ), ideally one that is conjugate to the Gaussian likelihood. The chi-squared distribution fits the bill naturally.

The Chi-Squared Distribution¶

Let and define . Then follows a chi-squared distribution with degrees of freedom,

with pdf

This is a special case of the gamma distribution: using the rate parameterization. Its mean is and its variance is .

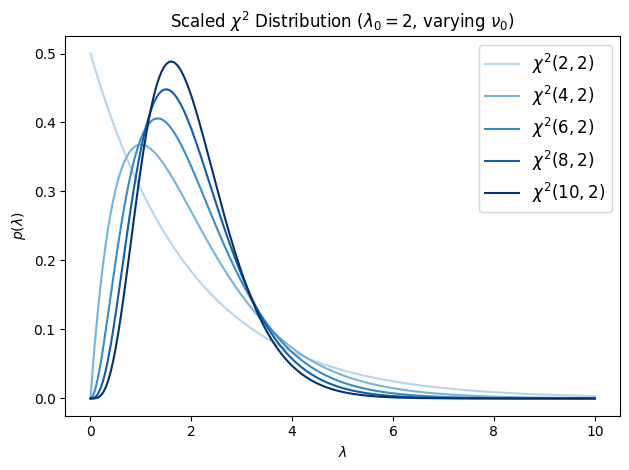

The Scaled Chi-Squared Distribution¶

The standard chi-squared has mean , which grows with the degrees of freedom. For a prior over precision, it is more convenient to control the mean independently of the degrees of freedom. We do this by drawing from a scaled version: let and define (note the average rather than the sum). Then we say

where is the scale parameter. Since with , we have , so where . Using the fact that the gamma distribution is closed under multiplicative scaling, , giving the pdf

The mean is regardless of , and the variance is . The parameter controls concentration: larger gives a tighter distribution around its mean .

Source

class ScaledChiSq(Gamma):

"""Scaled chi-squared distribution χ²(ν, λ).

Equivalent to Ga(ν/2, ν/(2λ)) using the rate parameterisation.

The mean is λ and the variance is 2λ²/ν.

"""

def __init__(self, ν, λ):

Gamma.__init__(self, ν / 2, ν / (2 * λ))

self.ν = ν

self.λ = λ

λ0 = torch.tensor(2.)

grid = torch.linspace(1e-3, 10, 200)

dofs = [2, 4, 6, 8, 10]

fig, ax = plt.subplots()

for i, ν in enumerate(dofs):

chisq = ScaledChiSq(torch.tensor(float(ν)), λ0)

ax.plot(grid, torch.exp(chisq.log_prob(grid)),

label=r"$\chi^2({}, {})$".format(ν, int(λ0)),

color=Blues(0.3 + 0.7 * i / (len(dofs) - 1)))

ax.legend(fontsize=12)

ax.set_xlabel(r"$\lambda$")

ax.set_ylabel(r"$p(\lambda)$")

ax.set_title(r"Scaled $\chi^2$ Distribution ($\lambda_0=2$, varying $\nu_0$)")

plt.tight_layout()

Conjugate Update¶

With the scaled chi-squared prior, the model is

The chi-squared distribution is conjugate to the Gaussian likelihood in . Letting , we compute:

This is another scaled chi-squared distribution, , where

The posterior degrees of freedom grow by one per observation. As (an uninformative prior), the posterior mean converges to , the inverse of the sample variance — as expected.

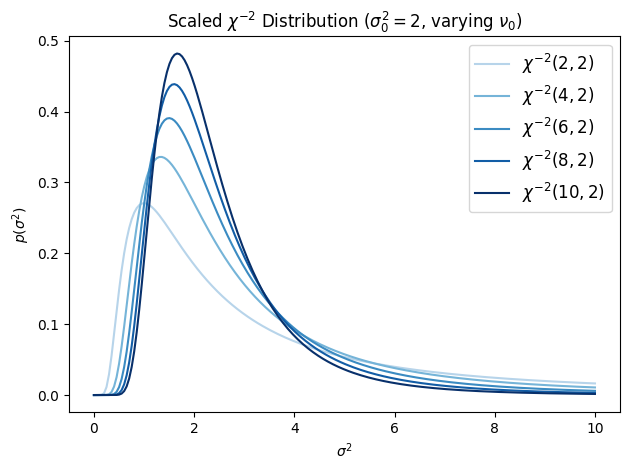

Normal Model with Unknown Variance¶

Working with the precision is mathematically natural, but it can be more intuitive to parameterize the model in terms of the variance . If , then the change-of-variables formula gives the distribution of :

where . This is the scaled inverse chi-squared distribution. It is a special case of the inverse gamma: .

The mean is (for ) and the mode is . The parameter is the prior “best guess” for the variance and controls how strongly that guess is held.

Source

class ScaledInvChiSq(TransformedDistribution):

"""Scaled inverse chi-squared: χ⁻²(ν, σ²).

Equivalent to IGa(ν/2, νσ²/2) using the rate parameterisation.

The mean is σ²·ν/(ν-2) and the mode is σ²·ν/(ν+2).

"""

def __init__(self, ν, σ2):

base = Gamma(ν / 2, ν * σ2 / 2)

TransformedDistribution.__init__(self, base, [PowerTransform(-1)])

self.ν = ν

self.σ2 = σ2

σ2_0 = torch.tensor(2.)

grid = torch.linspace(1e-3, 10, 200)

dofs = [2, 4, 6, 8, 10]

fig, ax = plt.subplots()

for i, ν in enumerate(dofs):

inv_chisq = ScaledInvChiSq(torch.tensor(float(ν)), σ2_0)

ax.plot(grid, torch.exp(inv_chisq.log_prob(grid)),

label=r"$\chi^{{-2}}({}, {})$".format(ν, int(σ2_0)),

color=Blues(0.3 + 0.7 * i / (len(dofs) - 1)))

ax.legend(fontsize=12)

ax.set_xlabel(r"$\sigma^2$")

ax.set_ylabel(r"$p(\sigma^2)$")

ax.set_title(r"Scaled $\chi^{{-2}}$ Distribution ($\sigma_0^2=2$, varying $\nu_0$)")

plt.tight_layout()

Parameterized in terms of the variance, the model is

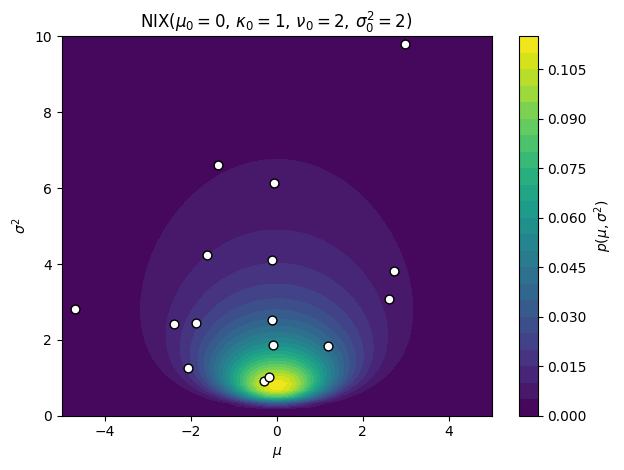

Normal Model with Unknown Mean and Variance¶

Finally, suppose both and are unknown. We want a joint conjugate prior over . The key insight is to write the prior as a product : an inverse chi-squared prior on the variance, and conditional on the variance, a Gaussian prior on the mean whose variance scales with . Specifically,

This is the normal-inverse-chi-squared (NIX) distribution — our first truly bivariate distribution. It has four hyperparameters: (prior mean), (mean confidence, in pseudo-observations), (variance degrees of freedom), and (prior variance scale).

The coupling is crucial: the uncertainty in the mean is proportional to the variance. When is large (we are uncertain about individual observations), we are also more uncertain about the mean. This dependence structure is what makes the NIX prior jointly conjugate.

Source

torch.manual_seed(305)

μ0 = torch.tensor(0.)

κ0 = torch.tensor(1.0)

ν0 = torch.tensor(2.0)

σ2_0 = torch.tensor(2.0)

def sample_nix(μ0, κ0, ν0, σ2_0, n):

σ2 = ScaledInvChiSq(ν0, σ2_0).sample(sample_shape=(n,))

μ = Normal(μ0, torch.sqrt(σ2 / κ0)).sample()

return μ, σ2

# Evaluate the joint density on a grid

μ_grid, σ2_grid = torch.meshgrid(

torch.linspace(-5, 5, 60),

torch.linspace(1e-3, 10, 60),

indexing='ij',

)

log_prob = ScaledInvChiSq(ν0, σ2_0).log_prob(σ2_grid)

log_prob += Normal(μ0, torch.sqrt(σ2_grid / κ0)).log_prob(μ_grid)

fig, ax = plt.subplots()

cf = ax.contourf(μ_grid, σ2_grid, torch.exp(log_prob), 25)

μ_samp, σ2_samp = sample_nix(μ0, κ0, ν0, σ2_0, 25)

ax.scatter(μ_samp.numpy(), σ2_samp.numpy(),

c='w', edgecolors='k', s=40, zorder=5)

ax.set_xlim(-5, 5)

ax.set_ylim(0, 10)

ax.set_xlabel(r"$\mu$")

ax.set_ylabel(r"$\sigma^2$")

ax.set_title(r"$\mathrm{{NIX}}(\mu_0={:.0f},\,\kappa_0={:.0f},\,\nu_0={:.0f},\,\sigma_0^2={:.0f})$".format(

μ0, κ0, ν0, σ2_0))

plt.colorbar(cf, ax=ax, label=r"$p(\mu, \sigma^2)$")

plt.tight_layout()

Posterior Update¶

With i.i.d. Gaussian observations , the posterior under the NIX prior is again NIX:

Just as in the known-variance case, is a weighted average of the prior mean and the sample mean. The posterior variance scale can be rewritten as , which combines the prior sum of squares with the data sum of squares, adjusted for the updated mean.

In the uninformative limit , , the posterior parameters become , , , and .

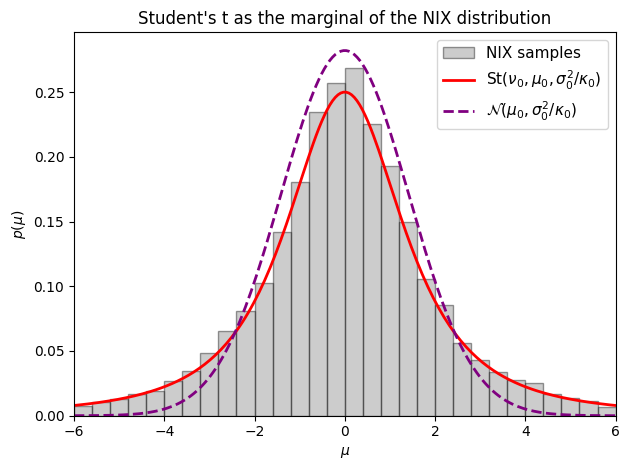

Posterior Marginals: Student’s t Distribution¶

What are the marginal distributions of and under the NIX posterior? The marginal of is straightforward — since is NIX, its marginal is .

The marginal of is more interesting. We integrate out :

where denotes the Student’s t distribution with degrees of freedom, location , and scale , with density

Its mean is and its variance is for .

The Student’s t arises here because we are averaging a Gaussian over a random variance drawn from an inverse chi-squared distribution. When is unknown and drawn from a broad distribution, the marginal distribution of has heavier tails than any single Gaussian — the extra uncertainty about the scale inflates the probability of large deviations. As , the Student’s t converges to a Gaussian.

Source

torch.manual_seed(305)

μ0 = torch.tensor(0.)

κ0 = torch.tensor(1.0)

ν0 = torch.tensor(2.0)

σ2_0 = torch.tensor(2.0)

# Analytical marginal p(μ) = St(ν0, μ0, σ2_0/κ0)

t_dist = StudentT(ν0, μ0, torch.sqrt(σ2_0 / κ0))

# Empirical samples from the NIX prior

σ2_samp = ScaledInvChiSq(ν0, σ2_0).sample(sample_shape=(10000,))

μ_samp = Normal(μ0, torch.sqrt(σ2_samp / κ0)).sample()

μ_grid = torch.linspace(-6, 6, 200)

bins = torch.linspace(-6, 6, 31)

fig, ax = plt.subplots()

ax.hist(μ_samp.numpy(), bins.numpy(), density=True,

alpha=0.4, color='gray', ec='k', label='NIX samples')

ax.plot(μ_grid, torch.exp(t_dist.log_prob(μ_grid)),

'-r', lw=2, label=r"$\mathrm{St}(\nu_0, \mu_0, \sigma_0^2/\kappa_0)$")

ax.plot(μ_grid,

torch.exp(Normal(μ0, torch.sqrt(σ2_0 / κ0)).log_prob(μ_grid)),

'--', color='purple', lw=2,

label=r"$\mathcal{N}(\mu_0, \sigma_0^2/\kappa_0)$")

ax.legend(fontsize=11)

ax.set_xlim(-6, 6)

ax.set_xlabel(r"$\mu$")

ax.set_ylabel(r"$p(\mu)$")

ax.set_title("Student's t as the marginal of the NIX distribution")

plt.tight_layout()

Posterior Credible Intervals¶

One of the main uses of the posterior is to construct credible intervals — Bayesian analogs of confidence intervals that have a direct probabilistic interpretation: is a credible interval if .

Under an uninformative prior, the posterior marginal is , and the central credible interval is

where is the quantile function of the Student’s t distribution.

Equivalently, note that the standardized quantity

so testing whether a hypothesized value lies in is equivalent to checking whether the test statistic satisfies .

This is structurally identical to the frequentist one-sample t-test, but with a different interpretation: instead of asking “how often would this test reject under repeated sampling?”, we ask “given the data we observed, how probable is it that lies outside this interval?” The Bayesian and frequentist answers happen to coincide numerically here (under the uninformative prior), but they represent fundamentally different epistemic claims.

Conclusion¶

This chapter developed Bayesian inference for the scalar Gaussian distribution from the ground up. The key takeaways are:

Conjugate priors make posterior inference tractable: the prior and posterior belong to the same family, so updating reduces to a simple update of hyperparameters.

The normal–inverse- prior is the natural conjugate for a Gaussian model with unknown mean and variance. Its two shape parameters and can be interpreted as pseudo-observations informing the variance and mean estimates respectively.

The Student’s distribution emerges naturally as the marginal distribution of the mean after integrating out the unknown variance — heavier tails reflect our additional uncertainty about the scale.

Bayesian credible intervals have a direct probabilistic interpretation that differs conceptually from frequentist confidence intervals, even when the two coincide numerically under uninformative priors.

- Bishop, C. M. (2006). Pattern recognition and machine learning. Springer.

- Murphy, K. P. (2023). Probabilistic Machine Learning: Advanced Topics. MIT Press. https://probml.github.io/pml-book/book2.html